What:

An evaluation metric for object detection models in Computer Vision. It measures both whether objects were correctly classified and how precisely they were located.

Background:

First, it’s useful to know some background.

- Precision: The fraction of correctly predicted objects out of all predicted objects. “Out of all the times we said positive, how many of those were true?”

- Recall: The fraction of correctly predicted objects out of all actual objects. “Out of all of the data points that are actually positive, how many of them did we predict to be positive?”

-

Precision-Recall Curve:

- There’s an inherent tradeoff between precision and recall. If you set your model to be really confident (high precision), it’ll only output predictions it is really sure about (and may miss some true objects (low recall)). The inverse is also true.

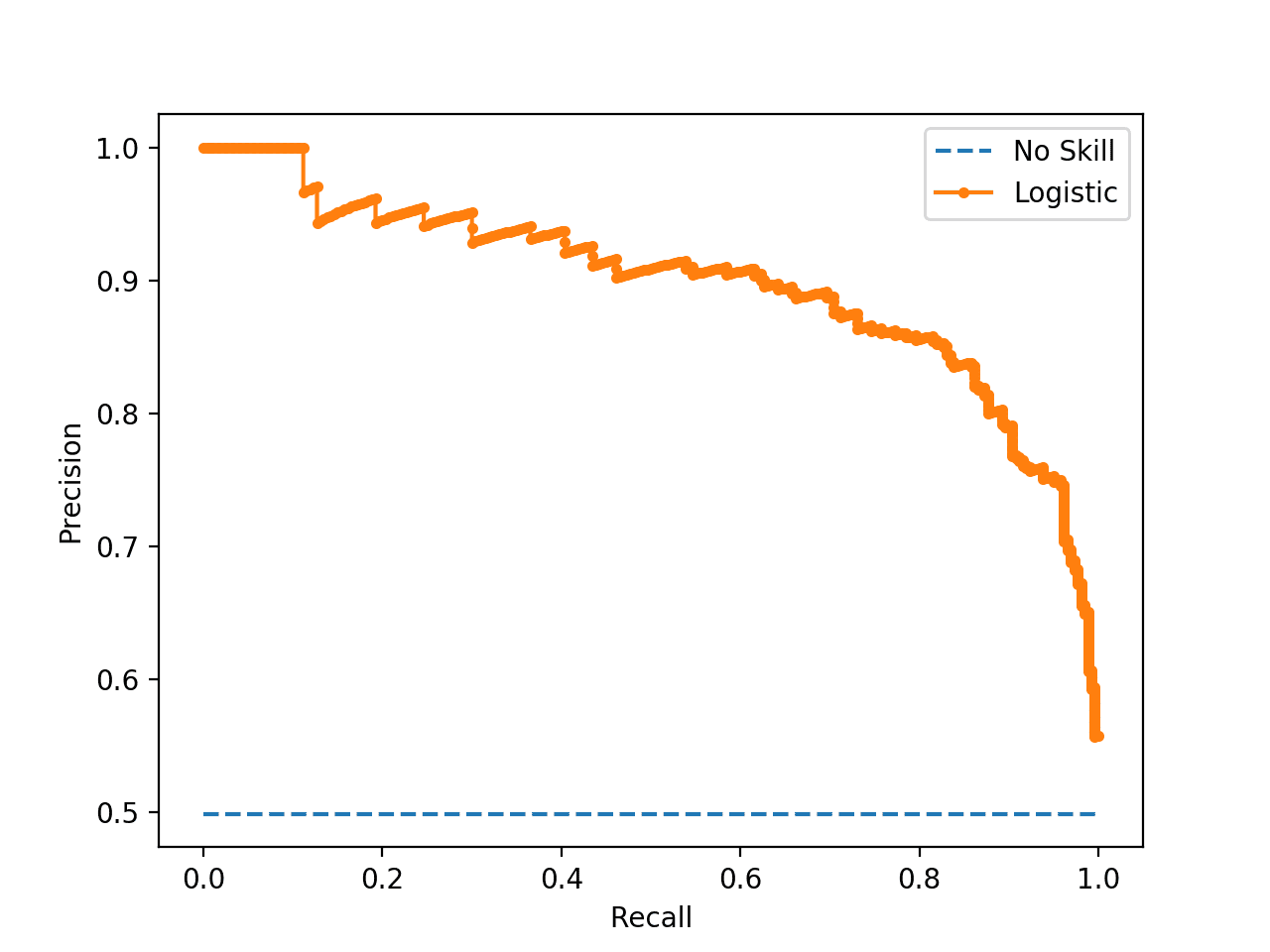

- Let’s adjust that hypothetical slider, and plot the precision and recall. We’ll get a graph like this (blue dotted line is predicting True the entire time):

- Object Detection: When building a model to recognise an object, say dogs, the model returns:

  - “There’s a dog at `[x1, y1, x2, y2]` with 90% confidence”

  - “There’s another dog at `[x1', y1', x2', y2']` with 78% confidence.”

  - etc.

- IoU (Intersection over Union): A measure of how well your predicted bounding box overlaps with the actual (ground truth) box: the area where the two boxes intersect divided by the area of their union.

  - To count a prediction as True, we typically set an IoU threshold (e.g. 0.5). It essentially says that “The boxes must overlap by at least this much to be considered a match.”
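To make the background concrete, here is a minimal sketch of IoU, precision and recall in plain Python. It assumes boxes are given as `[x1, y1, x2, y2]` with `x1 < x2` and `y1 < y2`; the function names `iou` and `precision_recall` are made up for illustration and don't come from any particular library.

```python
def iou(box_a, box_b):
    """Intersection over Union of two boxes in [x1, y1, x2, y2] format (assumed)."""
    # Corners of the rectangle where the two boxes overlap.
    ix1 = max(box_a[0], box_b[0])
    iy1 = max(box_a[1], box_b[1])
    ix2 = min(box_a[2], box_b[2])
    iy2 = min(box_a[3], box_b[3])

    # Clamp width and height at zero so non-overlapping boxes give zero area.
    intersection = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)

    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - intersection
    return intersection / union if union > 0 else 0.0


def precision_recall(true_positives, false_positives, false_negatives):
    """Precision and recall from raw counts (0.0 when a denominator is zero)."""
    predicted = true_positives + false_positives
    actual = true_positives + false_negatives
    precision = true_positives / predicted if predicted else 0.0
    recall = true_positives / actual if actual else 0.0
    return precision, recall


# Hypothetical boxes: one ground-truth dog and one predicted dog.
ground_truth = [0, 0, 10, 10]
prediction = [1, 1, 10, 10]
is_match = iou(ground_truth, prediction) >= 0.5  # IoU threshold of 0.5
```

With these made-up boxes the overlap is 81 out of a union of 100, so the prediction clears the 0.5 threshold and would count as a true positive.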

Mean Average Precision:

- We take the model’s outputs and sort them by confidence.

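- We mark each prediction as a true or false positive (using the IoU threshold), then compute the running precision and recall at each prediction as we go down the sorted list.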

- We then graph a Precision-Recall Curve of our model.

- We then calculate the area under the curve. This is the Average Precision (AP), and it gives us a single number summarising how good the model is at detecting that class across all images.

We then repeat that process for every class. We take the average of the per-class APs, giving us the Mean Average Precision (mAP).
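The steps above can be sketched roughly as follows. This is an illustration under a few assumptions, not a reference implementation: each detection is assumed to have already been matched against the ground truth (e.g. with an IoU threshold of 0.5) and is passed in as a hypothetical `(confidence, is_match)` pair, and the area under the curve is taken as a plain step-wise sum with no interpolation. Benchmarks such as Pascal VOC and COCO use interpolated variants, so their numbers will differ slightly.

```python
def average_precision(detections, num_ground_truth):
    """AP for one class.

    detections: list of (confidence, is_match) pairs (assumed format), where
        is_match is True if the box matched a ground-truth object.
    num_ground_truth: number of ground-truth objects of this class.
    """
    if num_ground_truth == 0:
        return 0.0

    # Step 1: sort the model's outputs by confidence, highest first.
    ranked = sorted(detections, key=lambda d: d[0], reverse=True)

    true_pos = 0
    false_pos = 0
    previous_recall = 0.0
    area = 0.0
    for _, is_match in ranked:
        # Step 2: running precision and recall at each prediction.
        if is_match:
            true_pos += 1
        else:
            false_pos += 1
        precision = true_pos / (true_pos + false_pos)
        recall = true_pos / num_ground_truth

        # Steps 3-4: add the strip of area gained since the last recall value.
        area += (recall - previous_recall) * precision
        previous_recall = recall
    return area


def mean_average_precision(per_class):
    """per_class maps class name -> (detections, num_ground_truth)."""
    scores = [average_precision(d, n) for d, n in per_class.values()]
    return sum(scores) / len(scores) if scores else 0.0


# Made-up example data: two classes with a handful of detections each.
example = {
    "dog": ([(0.90, True), (0.78, False), (0.60, True)], 2),
    "cat": ([(0.80, True)], 1),
}
map_score = mean_average_precision(example)
```

In this toy data the dog class gets an AP of about 0.83 (the false positive at 0.78 drags precision down before the second dog is found), the cat class gets 1.0, and the mAP is their average, about 0.92.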